Why the best reconciliation systems treat mismatches as explicit state transitions – not inference problems – and how AWS Step Functions, Bedrock, and DynamoDB create a pipeline that is fast, auditable, and safe to operate at scale.

Payment reconciliation is one of those back-office functions that looks solved until it breaks. Every payment platform has one. Most are built on a combination of scheduled batch jobs, spreadsheets, and accumulated tribal knowledge about which edge cases to handle manually on Tuesday mornings. The result is a process that works – until transaction volumes grow, payment channels multiply, or a regulatory audit demands a complete lineage trail for every exception resolution.

The reconciliation software market reflects how urgently this is changing.

According to Research and Markets, the sector is projected to grow from $2.8 billion in 2024 to $5.45 billion by 2029 at a 13.2% CAGR, driven by demand for real-time transaction visibility, AI-driven matching, and API-based fintech integration. The accounts receivable automation segment, which encompasses reconciliation as a core function, is growing faster still – from $4.25 billion in 2025 toward $10.1 billion by 2032.

But market growth data understates the operational pressure. According to Kani Payments’ 2025 Reconciliation and Reporting Survey of 250 UK payment businesses, not a single firm reported an error-free reconciliation process. 56% still rely on spreadsheets as a core tool, with 94% of those struggling to meet reporting deadlines. The average firm spends three hours preparing data before reconciliation even begins.

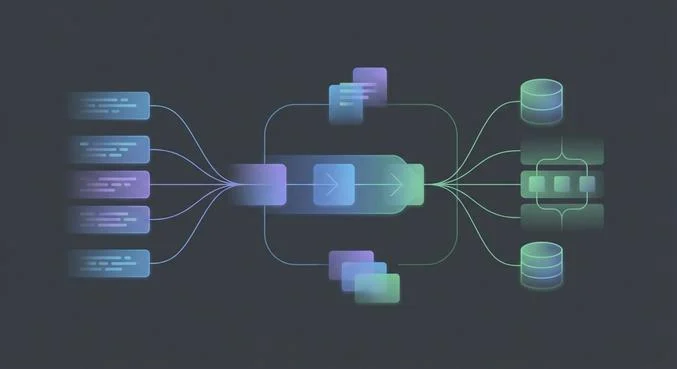

This article walks through the architecture Sphere uses to solve this at production scale on AWS: a three-layer system that separates deterministic ledger logic from AI-assisted ambiguity resolution, with event-driven orchestration that makes every exception observable, auditable, and recoverable.

Reconciliation Is Not an AI Problem

The most common architectural mistake in reconciliation automation is treating it as a reasoning challenge – something to be solved by building a smarter matching algorithm or deploying a large language model to interpret discrepancies. This framing leads to systems that are fragile in production, difficult to audit, and dangerous in regulated environments.

Reconciliation failures are structurally different from reasoning failures. They are almost never caused by a system that cannot understand a transaction. They are caused by:

- Inconsistent identifiers across payment processors, banks, and internal ledgers

- Timing mismatches between settlement dates and booking dates

- Partial records from upstream systems that batch at different frequencies

- Formatting variance in reference fields, currency codes, and amount representations

- Fragmented data lineage across multi-hop payment chains

These are disambiguation problems, not reasoning problems. The distinction matters architecturally because disambiguation problems require deterministic resolution – a confirmed answer that is committed to ledger state – while reasoning problems produce probabilistic hypotheses that must be validated before any state change.

Reconciliation agents reduce cognitive entropy. They do not own financial truth.

A system that lets an AI model silently correct a mismatch is structurally unsafe. If the model is wrong – and it will be, at some non-zero rate – the error propagates into ledger state without a visible decision point, an audit record, or a recovery path. The costs of that failure are asymmetric and severe: regulatory exposure, financial restatements, and loss of counterparty trust.

Market context: In 2024, 80% of executives reported losing business due to payment errors, according to PYMNTS research. Payment errors caused 61% of late payments, per the Credit Research Foundation. The Synapse fintech collapse in June 2024 – which left 100,000 customers unable to access savings and an $85M reconciliation shortfall – illustrated the systemic consequences of inadequate reconciliation controls. The full post-mortem is instructive reading for any team designing a new reconciliation architecture.

The Three-Layer Architecture

A stable, production-grade reconciliation system separates three concerns that are frequently collapsed into a single pipeline: deterministic state management, probabilistic inference, and durable transaction lineage. Collapsing them produces nondeterministic behavior that is extremely hard to test, debug, or defend in an audit.

Layer 1 – Deterministic Layer: Balance Checks, Ledger Mutations, Correctness Validation

The deterministic layer retains exclusive authority over all operations that modify financial state. This means balance reconciliation checks, ledger mutation commits, settlement validation, and regulatory reporting aggregation. Nothing in this layer accepts probabilistic outputs directly. Every write to the ledger is preceded by a confirmed validation – a yes/no answer from a rules engine, not a confidence score from a model.

AWS Lambda functions serve as the execution units for deterministic business logic: formatting transformations, identifier normalization, balance assertion checks, and rule-based auto-match for clean transactions (same amount, same reference, within tolerance). Amazon Aurora or DynamoDB holds the authoritative ledger state. On DynamoDB, reads are eventually consistent by default, so ledger checks should request strongly consistent reads and guard writes with condition expressions. For high-volume pipelines, Sphere’s engineers have validated the pattern documented by Lead Bank’s staff engineering team – a hybrid Lambda/ECS Fargate approach that handles large batch files via deterministic write sharding to avoid DynamoDB hot partition throttling under load.
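As a rough illustration of the rule-based auto-match described above, the sketch below normalizes reference fields and returns a plain yes/no answer. The `Record` fields, the normalization rule, and the default tolerances are assumptions made for the example, not Sphere's production rules.

```python
import re
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass(frozen=True)
class Record:
    """Hypothetical minimal shape of a ledger or bank record."""
    reference: str
    amount: Decimal
    currency: str
    value_date: date


def normalize_reference(raw: str) -> str:
    """Uppercase the reference and drop everything except letters and digits."""
    return re.sub(r"[^A-Z0-9]", "", raw.upper())


def is_clean_match(
    ledger: Record,
    bank: Record,
    amount_tolerance: Decimal = Decimal("0.00"),  # assumed default
    max_day_gap: int = 2,                         # assumed default
) -> bool:
    """Deterministic check: returns True or False, never a confidence score."""
    return (
        normalize_reference(ledger.reference) == normalize_reference(bank.reference)
        and ledger.currency.strip().upper() == bank.currency.strip().upper()
        and abs(ledger.amount - bank.amount) <= amount_tolerance
        and abs((ledger.value_date - bank.value_date).days) <= max_day_gap
    )
```

Amounts are held as `Decimal` rather than `float` so that tolerance comparisons are exact. Anything that fails this check is a candidate for the inference layer rather than a silent adjustment.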

Layer 2 – Inference Layer: Matching Hypotheses, Anomaly Classification, Semantic Interpretation

The inference layer handles the subset of transactions that the deterministic layer cannot resolve: records where identifiers are inconsistent across sources, where amounts differ by fees or FX rounding, where timing gaps require contextual interpretation, or where reference field formatting prevents clean matching.

Amazon Bedrock agents operate here, with access to reconciliation context retrieved from a knowledge base: matching rules, counterparty formatting conventions, known timing offset patterns, and historical resolution decisions for similar exception types. The agent produces a candidate resolution – a proposed match, a proposed rejection reason, or a proposed escalation – but does not commit it.

This is the architectural boundary that most failed reconciliation automation projects violate: agents propose, validators confirm. Every agent output is passed to the deterministic layer for acceptance or rejection. If the agent’s confidence is below threshold, or if the deterministic validator rejects the proposed match, the exception routes to an explicit human review state. Silent auto-correction is structurally prohibited.
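The "agents propose, validators confirm" boundary can be sketched as a small gate function. The `Proposal` fields, the 0.9 confidence threshold, and the residual tolerance are hypothetical. In this sketch confidence alone never commits anything: a deterministic arithmetic check must also pass.

```python
from dataclasses import dataclass
from decimal import Decimal
from enum import Enum


class Route(str, Enum):
    COMMIT = "COMMIT"
    HUMAN_REVIEW = "HUMAN_REVIEW"


@dataclass(frozen=True)
class Proposal:
    """Hypothetical shape of an agent's candidate resolution."""
    exception_id: str
    ledger_amount: Decimal
    counterparty_amount: Decimal
    explained_difference: Decimal  # e.g. a fee the agent says accounts for the gap
    confidence: float


def validate(
    proposal: Proposal,
    min_confidence: float = 0.9,                    # assumed threshold
    residual_tolerance: Decimal = Decimal("0.01"),  # assumed tolerance
) -> Route:
    """Accept a proposal only if it is confident AND the numbers reconcile."""
    if proposal.confidence < min_confidence:
        return Route.HUMAN_REVIEW
    residual = abs(
        proposal.ledger_amount
        - proposal.counterparty_amount
        - proposal.explained_difference
    )
    if residual > residual_tolerance:
        # The agent's explanation does not close the gap: reject deterministically.
        return Route.HUMAN_REVIEW
    return Route.COMMIT
```

A high-confidence proposal whose explanation leaves an unexplained residual still routes to human review, which is the point of keeping the gate outside the model.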

Sphere’s AWS practice: As an AWS Advanced Partner, Sphere designs the full integration stack – Bedrock agents, Step Functions orchestration, Lambda validators, and DynamoDB state stores – with the audit logging and governance scaffolding that financial services regulators require. See our AWS cloud capabilities and AI solutions for financial services.

Layer 3 – State Layer: Durable Transaction Lineage, Reconciliation Context, Retry Anchors

State externalization is non-negotiable in reconciliation systems. Reconciliation workflows are inherently stateful: the resolution of a mismatch today depends on what happened to similar mismatches last week, what the counterparty’s known formatting behavior is, and what the current open exception queue looks like. A stateless inference system has no access to this context and degrades severely under retry and failure scenarios.

DynamoDB serves as the durable state store for the reconciliation agent: each mismatch is written as an explicit record with a status field (OPEN, IN_REVIEW, RESOLVED, REJECTED, ESCALATED), a full lineage of all inputs and outputs, and a retry anchor that allows failed workflows to resume from the point of failure rather than restart from scratch. This is the layer that makes the system replayable – a requirement for audit and for disaster recovery.
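One way to make each status change an explicit, guarded write is a conditional update. The sketch below only builds the arguments for a DynamoDB `UpdateItem` call. The key name `exception_id`, the attribute names, and the table of allowed transitions are assumptions for illustration.

```python
from datetime import datetime, timezone
from typing import Optional

# Assumed transition rules; a real taxonomy would come from the exception design.
ALLOWED_TRANSITIONS = {
    "OPEN": {"IN_REVIEW", "RESOLVED", "ESCALATED"},
    "IN_REVIEW": {"RESOLVED", "REJECTED", "ESCALATED"},
    "ESCALATED": {"RESOLVED", "REJECTED"},
    "RESOLVED": set(),
    "REJECTED": set(),
}


def build_transition_update(
    exception_id: str,
    from_status: str,
    to_status: str,
    actor: str,
    step: str,
    now: Optional[datetime] = None,
) -> dict:
    """Build UpdateItem arguments that move one exception between statuses."""
    if to_status not in ALLOWED_TRANSITIONS.get(from_status, set()):
        raise ValueError(f"illegal transition {from_status} -> {to_status}")
    ts = (now or datetime.now(timezone.utc)).isoformat()
    return {
        "Key": {"exception_id": exception_id},
        # Set the new status, move the retry anchor, and append to the lineage.
        "UpdateExpression": (
            "SET #s = :to, #r = :step, "
            "#l = list_append(if_not_exists(#l, :empty), :entry)"
        ),
        # The write succeeds only if the record is still in the expected status.
        "ConditionExpression": "#s = :from",
        "ExpressionAttributeNames": {
            "#s": "status",  # aliased because STATUS is a DynamoDB reserved word
            "#r": "retry_anchor",
            "#l": "lineage",
        },
        "ExpressionAttributeValues": {
            ":from": from_status,
            ":to": to_status,
            ":step": step,
            ":empty": [],
            ":entry": [
                {"at": ts, "actor": actor, "from": from_status, "to": to_status}
            ],
        },
    }
```

With a boto3 `Table` resource these arguments would be passed as `table.update_item(**params)`. If a concurrent writer has already moved the record, the condition fails and the call raises a `ConditionalCheckFailedException` error instead of overwriting state.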

Event-Driven Orchestration: Mismatches as State Transitions

The orchestration pattern that dominates successful reconciliation implementations is event-driven, not batch-polling. Every mismatch becomes an explicit state transition on an event bus rather than an implicit correction inside a loop. This is not an architectural preference – it is what makes the system observable, auditable, and recoverable at production scale.

Amazon EventBridge serves as the event backbone. When a transaction ingestion Lambda publishes a MISMATCH_DETECTED event, EventBridge routes it to an SQS queue whose consumer (a Lambda function or an EventBridge Pipe) starts an AWS Step Functions state machine execution. The state machine owns the full reconciliation workflow for that exception. AWS’s guidance on building event-driven payment systems documents this pattern in detail, including the use of Dead Letter Queues for failed processing, CloudTrail for API-level audit, and X-Ray for tracing execution paths across Lambda and Step Functions.
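For illustration, a MISMATCH_DETECTED event could be shaped as a single EventBridge `PutEvents` entry like the one below. The source name `recon.ingestion`, the bus name, and the detail fields are hypothetical. Only the entry keys (`Source`, `DetailType`, `Detail`, `EventBusName`, `Time`) come from the PutEvents API.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def build_mismatch_event(
    exception_id: str,
    source_system: str,
    reason: str,
    bus_name: str = "reconciliation-bus",  # assumed bus name
    detected_at: Optional[datetime] = None,
) -> dict:
    """Build one PutEvents entry describing a detected mismatch."""
    ts = detected_at or datetime.now(timezone.utc)
    detail = {
        "exception_id": exception_id,
        "source_system": source_system,
        "reason": reason,
        "detected_at": ts.isoformat(),
    }
    return {
        "Source": "recon.ingestion",        # assumed source identifier
        "DetailType": "MISMATCH_DETECTED",
        "EventBusName": bus_name,
        "Time": ts,
        "Detail": json.dumps(detail, sort_keys=True),  # Detail must be a JSON string
    }
```

An ingestion Lambda would typically send this with `boto3.client("events").put_events(Entries=[entry])` and check `FailedEntryCount` in the response, since PutEvents can partially fail without raising.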

Step Functions provides what language models and Lambda functions cannot: ordered execution with deterministic branching, explicit timeout handling, bounded retry semantics, and a visual execution history that operations teams can inspect in real time. When the Bedrock agent invocation times out or returns a low-confidence output, Step Functions routes the exception to a defined state – ESCALATED or PENDING_REVIEW – rather than silently retrying or cascading.
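The branching described above might look like the following Amazon States Language definition, built here as a Python dict so it can be serialized with `json.dumps`. State names, timeouts, retry counts, and the 0.9 threshold are assumptions, and the agent is assumed to be invoked through a wrapper Lambda that returns a `confidence` field.

```python
import json

LOW_CONFIDENCE = 0.9  # assumed threshold


def build_state_machine(agent_lambda_arn: str, validator_lambda_arn: str) -> dict:
    """Return an ASL definition with bounded retries and explicit failure states."""
    return {
        "Comment": "Sketch: one execution per reconciliation exception",
        "StartAt": "ProposeResolution",
        "States": {
            "ProposeResolution": {
                "Type": "Task",
                "Resource": agent_lambda_arn,
                "TimeoutSeconds": 60,
                "ResultPath": "$.proposal",
                # Bounded retry. States.TaskFailed does not match States.Timeout,
                # so a timeout skips the retry and falls through to Catch.
                "Retry": [{
                    "ErrorEquals": ["States.TaskFailed"],
                    "IntervalSeconds": 2,
                    "MaxAttempts": 2,
                    "BackoffRate": 2.0,
                }],
                "Catch": [{
                    "ErrorEquals": ["States.ALL"],
                    "ResultPath": "$.error",
                    "Next": "Escalated",
                }],
                "Next": "CheckConfidence",
            },
            "CheckConfidence": {
                "Type": "Choice",
                "Choices": [{
                    "Variable": "$.proposal.confidence",
                    "NumericLessThan": LOW_CONFIDENCE,
                    "Next": "PendingReview",
                }],
                "Default": "ValidateProposal",
            },
            "ValidateProposal": {
                "Type": "Task",
                "Resource": validator_lambda_arn,
                "TimeoutSeconds": 30,
                "End": True,
            },
            "PendingReview": {
                "Type": "Pass",
                "Result": {"status": "PENDING_REVIEW"},
                "ResultPath": "$.routing",
                "End": True,
            },
            "Escalated": {
                "Type": "Pass",
                "Result": {"status": "ESCALATED"},
                "ResultPath": "$.routing",
                "End": True,
            },
        },
    }
```

The `Pass` states are placeholders: in a fuller design they would be replaced by tasks that record the status change and notify reviewers.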

This event-driven design has a critical audit property: every state transition is a logged event with a timestamp, an actor (Lambda function, Bedrock agent, or human reviewer), and a full input/output record. When a regulator asks how a specific exception was resolved six months ago, the answer is in EventBridge’s event archive, not in someone’s memory.

Architecture reference: AWS has published a detailed reference architecture for event-driven invoice processing and financial monitoring at scale – including the cellular architecture pattern for independent scaling and the EventBridge/SNS/SQS fanout model for high-volume ingestion. See: Implement event-driven invoice processing for resilient financial monitoring at scale

Observability: What Standard Metrics Miss

Reconciliation systems fail slowly. A matching algorithm that silently accepts borderline matches at a 0.3% error rate looks fine in dashboards until quarter-close, when the accumulated drift surfaces as a material balance discrepancy. Standard infrastructure metrics – Lambda invocation counts, Step Functions execution durations, DynamoDB read/write units – do not catch this class of failure.

Production reconciliation observability requires a second tier of instrumentation that captures inference-specific signals:

- Agent inputs and outputs for every Bedrock invocation – not just success/failure, but the full context passed and the full resolution proposed

- Decision contexts: what knowledge base documents were retrieved, what matching rules were applied, what confidence threshold was evaluated

- Validation outcomes: how many agent proposals were accepted vs. rejected by the deterministic layer, and the distribution of rejection causes

- Exception aging: how long each OPEN exception has been in queue, with alerts for mismatches that exceed SLA thresholds

- Match accuracy drift: a rolling metric on the human override rate for agent-proposed matches, which is the leading indicator of model degradation

CloudWatch custom metrics handle the infrastructure signals. The inference-specific signals require dedicated logging from each Lambda function that interacts with the Bedrock agent, structured as JSON events that can be queried in CloudWatch Logs Insights or streamed to a data warehouse for trend analysis.
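A structured inference log line might be produced as follows. The field names and the `agent_decision` event type are hypothetical. The only mechanism assumed is that a Lambda function writes one JSON object per line to its log stream.

```python
import json
from datetime import datetime, timezone
from typing import List, Optional


def inference_log_event(
    exception_id: str,
    retrieved_doc_ids: List[str],
    rules_applied: List[str],
    confidence: float,
    threshold: float,
    validation_outcome: str,  # "ACCEPTED" or "REJECTED" (assumed vocabulary)
    rejection_cause: Optional[str] = None,
    now: Optional[datetime] = None,
) -> str:
    """Serialize one agent decision as a single-line JSON log event."""
    event = {
        "event_type": "agent_decision",
        "timestamp": (now or datetime.now(timezone.utc)).isoformat(),
        "exception_id": exception_id,
        "retrieved_doc_ids": retrieved_doc_ids,
        "rules_applied": rules_applied,
        "confidence": confidence,
        "threshold": threshold,
        "below_threshold": confidence < threshold,
        "validation_outcome": validation_outcome,
        "rejection_cause": rejection_cause,
    }
    return json.dumps(event, sort_keys=True)
```

Printing this string from a Lambda handler sends it to CloudWatch Logs, where Logs Insights discovers the JSON fields. A query along the lines of `filter event_type = "agent_decision" | stats count(*) by validation_outcome, rejection_cause` would then give the acceptance and rejection distribution.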

Sphere’s financial services engineering experience – including our fraud detection work with a major bank using AWS Lambda, Kinesis, and SageMaker – has reinforced that observability is not a post-launch concern. Systems that instrument inference behavior from day one are significantly cheaper to operate and far easier to defend in regulatory reviews than those that retrofit logging after the first audit finding.

Anti-Patterns That Appear in Production

Reconciliation automation projects that stall or regress in production almost always exhibit one of the following structural problems. Each is identifiable early if the architecture review asks the right questions.

Silent Auto-Correction

The most dangerous pattern: an AI agent or matching algorithm writes directly to ledger state without a deterministic validation gate. This produces a system that appears to work – match rates look high, exception queues look empty – while accumulating errors that surface only during period-close or audit. The fix is architectural: the inference layer must never write directly to the deterministic layer. All agent outputs pass through an explicit validation state.

Stateless Inference Under Retry

When reconciliation workflows retry after failure, a stateless inference system starts from scratch. It may propose a different resolution than it did on the first attempt, creating inconsistency in the lineage record. The retry anchors stored in DynamoDB solve this: on retry, the workflow loads the existing exception record and resumes from the last confirmed state, not from the beginning. The agent sees the same context it saw before, and any proposal already recorded in the lineage is reused rather than regenerated, which keeps the outcome reproducible.
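A minimal sketch of resuming from recorded state on retry, using an in-memory stand-in for the exception table. `ExceptionStore` and `propose_once` are hypothetical names. A real implementation would read and write the DynamoDB record instead.

```python
from typing import Callable, Dict


class ExceptionStore:
    """In-memory stand-in for the exception table (illustrative only)."""

    def __init__(self) -> None:
        self._items: Dict[str, dict] = {}

    def get(self, exception_id: str) -> dict:
        # Create the record on first access so retries find the same item.
        return self._items.setdefault(
            exception_id, {"status": "OPEN", "proposal": None}
        )


def propose_once(
    store: ExceptionStore,
    exception_id: str,
    call_agent: Callable[[str], dict],
) -> dict:
    """Invoke the agent only if no proposal has been recorded yet."""
    record = store.get(exception_id)
    if record["proposal"] is None:
        # First attempt: call the agent and persist its output before moving on.
        record["proposal"] = call_agent(exception_id)
    # Retry path: return the recorded proposal instead of asking again.
    return record["proposal"]
```

Because the proposal is persisted before the workflow advances, a retried execution replays the recorded output and the lineage never shows two competing proposals for the same step.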

Batch Polling Instead of Event-Driven Processing

Polling-based reconciliation – a scheduled Lambda that scans for unmatched records every N minutes – introduces inherent latency and masks the relationship between specific upstream events and their downstream effects. It also creates load spikes at polling intervals that can cause DynamoDB throttling at scale. Event-driven architectures, as described in the AWS payment systems guidance, eliminate this by processing each mismatch immediately as a discrete event with its full context preserved.

Collapsed Responsibility Boundaries

Letting the Step Functions workflow make business logic decisions, or letting the Bedrock agent sequence its own workflow steps, or using Lambda for both stateful ledger writes and stateless inference – any of these collapses the separation between layers and makes the system behavior unpredictable under failure. The test: can you change the matching model without touching the ledger commit logic? If not, the architecture is entangled.

Related reading: Sphere’s broader AI engineering practice covers failure mode analysis and governance architecture for agentic systems in financial services. Our AI Solutions practice engages these problems with a structured methodology: use-case validation, explicit failure state design, and observability from day one.

Implementation Roadmap: Four Phases to Production

Phase 1 – Data Mapping and Exception Taxonomy (Weeks 1–3)

Before any infrastructure is provisioned, define the complete taxonomy of exception types: identifier mismatches, timing mismatches, amount variances within tolerance, amount variances outside tolerance, missing records, and duplicate records. Assign each type a default resolution path – auto-match, agent review, or immediate human escalation. This taxonomy becomes the branching logic in the Step Functions state machine and the evaluation criteria for the deterministic validation layer.

- Map all data sources: payment processor feeds, bank statements, internal ledger format

- Document identifier schemas across each source and define normalization rules

- Define match tolerance thresholds by transaction type and counterparty

- Establish exception SLA targets: time-to-review and time-to-resolve by severity tier
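The exception taxonomy and its default resolution paths can be captured as plain data before any infrastructure exists. The exception types below are the ones named in this phase, while the path assigned to each type is purely illustrative.

```python
from enum import Enum


class ExceptionType(Enum):
    IDENTIFIER_MISMATCH = "identifier_mismatch"
    TIMING_MISMATCH = "timing_mismatch"
    AMOUNT_VARIANCE_WITHIN_TOLERANCE = "amount_variance_within_tolerance"
    AMOUNT_VARIANCE_OUTSIDE_TOLERANCE = "amount_variance_outside_tolerance"
    MISSING_RECORD = "missing_record"
    DUPLICATE_RECORD = "duplicate_record"


class ResolutionPath(Enum):
    AUTO_MATCH = "auto_match"
    AGENT_REVIEW = "agent_review"
    HUMAN_ESCALATION = "human_escalation"


# Illustrative assignments only; each team sets these during Phase 1.
DEFAULT_PATH = {
    ExceptionType.IDENTIFIER_MISMATCH: ResolutionPath.AGENT_REVIEW,
    ExceptionType.TIMING_MISMATCH: ResolutionPath.AGENT_REVIEW,
    ExceptionType.AMOUNT_VARIANCE_WITHIN_TOLERANCE: ResolutionPath.AUTO_MATCH,
    ExceptionType.AMOUNT_VARIANCE_OUTSIDE_TOLERANCE: ResolutionPath.HUMAN_ESCALATION,
    ExceptionType.MISSING_RECORD: ResolutionPath.HUMAN_ESCALATION,
    ExceptionType.DUPLICATE_RECORD: ResolutionPath.HUMAN_ESCALATION,
}


def default_path(exception_type: ExceptionType) -> ResolutionPath:
    """Look up the default resolution path for an exception type."""
    return DEFAULT_PATH[exception_type]
```

Keeping the mapping as data makes it easy to check that every exception type has a path, and the same table can later drive the branches of the state machine.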

Phase 2 – Deterministic Pipeline (Weeks 4–7)

- Deploy EventBridge event bus, SQS queues with DLQs, and Step Functions state machine skeleton

- Build Lambda ingestion functions for each data source with identifier normalization

- Implement auto-match rules for clean transactions (exact match on amount, reference, date within tolerance)

- Set up DynamoDB exception ledger with status fields and retry anchor structure

- Configure CloudWatch dashboards and alerts for infrastructure metrics

Validate this phase in isolation before introducing any inference components. Run historical exception data through the deterministic pipeline and measure match rates. The deterministic layer should resolve 60–80% of exceptions without AI involvement in a well-governed payment environment.

Phase 3 – Inference Integration (Weeks 8–12)

- Integrate Bedrock agents for the exception subset that deterministic rules cannot resolve

- Build knowledge base with counterparty formatting conventions, known timing offset patterns, and historical resolution examples

- Implement validation gate: every agent output passes through a deterministic confirmation step before ledger write

- Add human review workflow: Step Functions .waitForTaskToken pattern for ESCALATED exceptions

- Deploy inference-layer observability: structured logging of agent inputs, outputs, confidence, and validation outcomes
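The human review step could be expressed as a Step Functions task that uses the `.waitForTaskToken` integration pattern with SQS. In this sketch the message body fields, the one-day timeout, and the `ValidateReviewDecision` next state are assumptions.

```python
def human_review_state(queue_url: str, timeout_seconds: int = 86400) -> dict:
    """Build an ASL Task state that pauses until a reviewer returns the token."""
    return {
        "Type": "Task",
        # The execution pauses here until the task token is returned.
        "Resource": "arn:aws:states:::sqs:sendMessage.waitForTaskToken",
        "Parameters": {
            "QueueUrl": queue_url,
            "MessageBody": {
                "exception_id.$": "$.exception_id",
                # $$.Task.Token is read from the Step Functions context object.
                "task_token.$": "$$.Task.Token",
            },
        },
        # Bound the wait so an unreviewed exception fails visibly.
        "TimeoutSeconds": timeout_seconds,
        "ResultPath": "$.review",
        "Next": "ValidateReviewDecision",  # hypothetical follow-on state
    }
```

The review tool would resume the execution by calling `SendTaskSuccess` (or `SendTaskFailure`) with the token it received. The reviewer's decision then still passes through a deterministic validation state before any ledger write.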

Phase 4 – Hardening and Scale (Weeks 13–18)

- Run variance testing: delayed feeds, partial records, contradictory amounts, formatting edge cases

- Implement write sharding for DynamoDB if transaction volumes exceed 10K/day per entity

- Add ECS Fargate path for large batch file processing (Lambda 15-minute timeout mitigation)

- Establish model accuracy drift monitoring: rolling 7-day human override rate with alert thresholds

- Complete compliance documentation: full lineage trail for sample exceptions across each resolution path
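Deterministic write sharding, mentioned in the hardening list above, can be sketched as a calculated suffix on the partition key. The key format `entity#shard`, the choice of SHA-256, and the shard count of 10 are assumptions for illustration.

```python
import hashlib
from typing import List


def sharded_partition_key(
    entity_id: str, transaction_id: str, shard_count: int = 10
) -> str:
    """Derive a stable shard suffix from the transaction ID."""
    digest = hashlib.sha256(transaction_id.encode("utf-8")).digest()
    shard = int.from_bytes(digest[:4], "big") % shard_count
    return f"{entity_id}#{shard}"


def all_partition_keys(entity_id: str, shard_count: int = 10) -> List[str]:
    """List every shard key for an entity, for reads that must fan out."""
    return [f"{entity_id}#{i}" for i in range(shard_count)]
```

Because the suffix is calculated rather than random, a single transaction can be read back directly by recomputing its key. Queries across a whole entity have to fan out over all shard keys and merge the results, which is the trade-off for spreading writes across partitions.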

Conclusion: Build the State Machine Before the Model

The reconciliation agent architecture that holds up in production is the one that treats financial state as sacrosanct and AI inference as a useful but probabilistic contributor. The state machine comes first. The deterministic validation gate comes second. The Bedrock agent operates within those constraints – never outside them.

This sequencing matters because the failure modes of each layer are different. Deterministic systems fail in predictable, detectable ways: a rule is wrong, a threshold is misconfigured, a data format changes. Inference systems fail probabilistically and gradually: match accuracy drifts, edge cases accumulate, silent misclassifications compound into material discrepancies. Treating them identically in your architecture is how reconciliation systems end up requiring a team of six people to manage exceptions that were supposed to be automated.

The market pressure to modernize reconciliation infrastructure is real and growing. The reconciliation software market is expanding at over 13% annually. Regulatory mandates around real-time settlement visibility are tightening. Transaction volumes are increasing faster than headcount can grow. The organizations that build this architecture correctly – deterministic state management, bounded inference, event-driven orchestration, and inference-specific observability – will process more volume with less operational overhead and satisfy audits with confidence.

Sphere brings AWS Advanced Partner-level architecture expertise and deep financial services engineering experience to these engagements. If you are designing a payment reconciliation system from scratch, or stabilizing one that has grown beyond its original architecture, our team is ready to help. Explore our AWS cloud practice, our financial services consulting capabilities, and our FinTech engineering practice.